Navigating the AI revolution: how designers can stay competitive

Artificial intelligence is changing how we design and the skills required to succeed in the design industry. This article will explore the impact AI has on design and how designers can prepare for the future.

Reality, not hype

The AI revolution is not the future; it is happening now. According to the Global AI Survey by Morning Consult, 34% of businesses in the U.S., Europe, and China have already adopted AI.

Many organizations, such as the World Economic Forum and IBM, recognize AI as the primary technology that will drive the Fourth Industrial Revolution. It will fundamentally alter the way we live, work, and relate to one another.

AI can be biased, produce unethical and even dangerous results, and generate incorrect or misleading information. However, it is evolving at an incredible rate and these issues are likely to improve over time.

How generative AI is changing the world

2022 saw a big disruption in generative AI: technology that generates something new rather than analyzing something that already exists.

The Generative AI Application Landscape by Sequoia

Ability to give human-like answers

Google issued a "code red" alert as ChatGPT from OpenAI became popular. Instead of googling things, people will be able to ask an AI chatbot about anything.

Currently, AI answers are not reliable, but in the future, they may be able to compete with consultants. According to McKinsey, AI could potentially be used in the Risk and Legal industry to answer complex questions, review legal documentation, and draft annual reports. Morgan Stanley, for example, is already working on AI that will be able to advise clients on wealth management.

Customer service will also benefit from AI. Current chatbots may not meet customer expectations, but they might replace more human managers in the future.

Large Language Model concept generated by Midjourney

Ability to generate content

Generative AI is already being used in marketing and SEO. It speeds up content creation and provides the necessary illustrations.

Jasper, for example, a marketing-focused version of GPT-3, can produce blogs, social media posts, web copy, sales emails, ads, and other types of customer-facing content.

Ability to generate graphics

Tools such as Microsoft Designer, Runway, DALL-E, and Midjourney make design more accessible to the general public and accelerate the process of generating graphics.

AI-generated imagery is already being used in marketing and design. Nestle used an AI-enhanced version of a Vermeer painting for its marketing campaign. Stitch Fix is experimenting with DALL-E 2 to visualize clothing based on customer preferences for color, fabric, and style. Nutella used algorithmic design to create 7 million unique package designs. Advertising agency BBDO is experimenting with producing materials with Stable Diffusion.

Also, a good-looking presentation or a social media post can now be generated without a designer (finally 😉).

Runway combines a set of AI design tools to produce everything from videos to illustrations

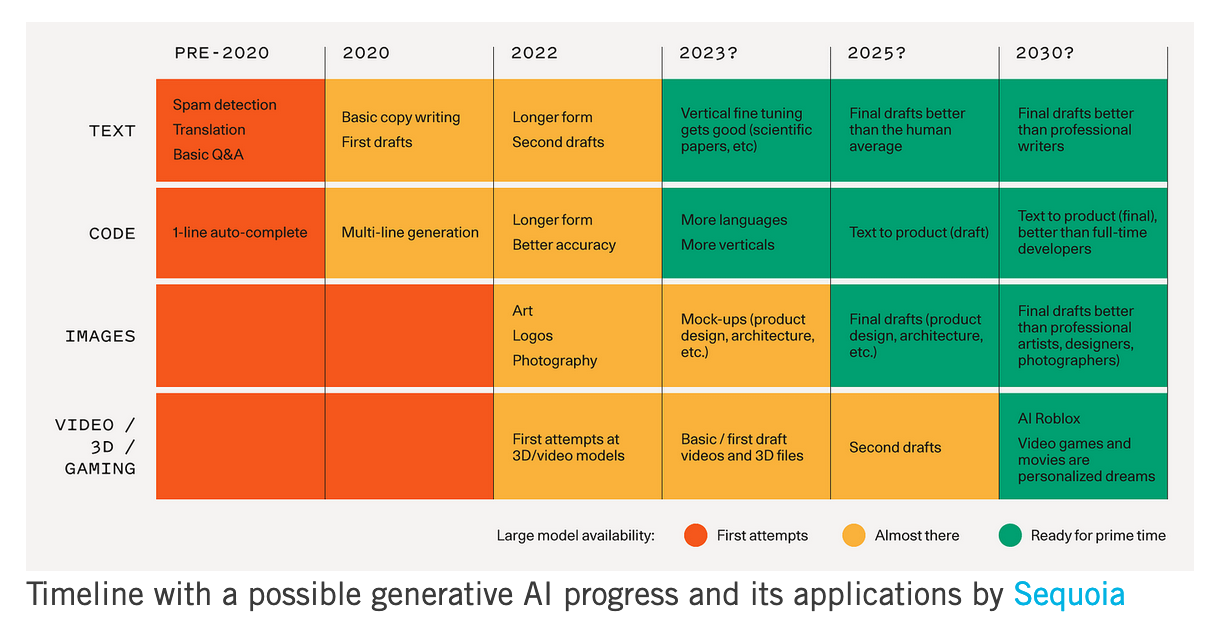

Below is a chart made by Sequoia with a timeline for how they expect to see fundamental models progress and the associated applications:

Timeline of possible generative AI progress and its applications, by Sequoia

How design will change

UI design

AI is already capable of creating Dribbble-level UI design. The following images were created by Midjourney.

These are the results of an AI trained on very diverse types of images. What if we create an AI that is trained on UI specifically?

If Midjourney, for example, can get away with taking the whole Artstation for training without the authors’ consent, someone else might decide to train their model on a perfectly labeled collection from Dribbble, Mobbin, Pageflow, or Pttrns.

Will it be able to generate UI directly in Figma? Well, the first experiments are already going on.

Airbnb also experiments with generative UI, making design components with production-ready code from hand-drawn wireframe sketches using machine learning and computer vision-enabled AI.

What about generating a whole app from a description? Builder.ai is already exploring this space, creating the draft version of an app with artificial intelligence.

The way we approach design systems is also likely to change. With the introduction of headless design systems, which Figma is already discussing, AI can be trained to generate a standard set of components based on an inspirational image or even a description. What is now a notoriously tedious job could be outsourced to machines.

Idea exploration

Generative AI is a powerful sketching tool that will speed up the exploration process. Designers and non-designers can now explore massive numbers of alternative directions in a fraction of the time needed before.

User research

Generative AI has some applications in the user research process. For example, we might imagine AI assisting in creating preparation materials for research or drafting various reports.

But other AI models can move user research to another level by analyzing the research data.

For example, UserTesting is already implementing machine learning to identify the sentiments in the videos of user testing sessions. We don’t know yet, but in the future Large Language Models might be able to generate reports out of these testing videos.

AI might also help analyze large volumes of customer data coming from different sources, such as Intercom, social media, app reviews, and email. As a result, UX designers will be able to get a more reliable picture of user behavior with less effort.

Growth experiments might also change with the introduction of machine learning in the process. Facebook is using ML in the advertisement process: out of many ad variations, it is able to pick the best one and understand when and to whom it is better to show it. In the future, designers might also have a similar tool that will automate testing and speed up growth experiments.

How designers can prepare

The first attempts to introduce AI into the design process are already under way. For example, interior designers are testing InteriorAI for mockup creation, service designers have started using AI as a sketching tool, and product designers are using DALL-E in the brainstorming process.

Productivity

AI will dramatically increase designers’ productivity. It will speed up the design process from analyzing user data to making prototypes.

Designers can already generate icons, copy, or images for their designs and use AI for visual explorations.

If you need help writing prompts, these services can help: Phraser, Dallelist, Midjourney Prompt Generator, PromptHero, and the handbook by dallery.gallery.

Higher-level design

Increased productivity, and the fact that AI might take over the pixel-pushing jobs, means that designers will have more resources to focus on higher-level issues such as research, product strategy, and growth experiments.

Social intelligence and human-centric design

As AI starts to assist in tasks that require hard skills, designers will have more time for activities that require social intelligence. Challenges like how to bring value and delight to people and how they interact with each other and the system are hard to outsource to AI.

AI might open more opportunities to focus on people and free resources for human-centric design and accessibility work.

New challenges for designers

As technology advances, new challenges for designers might emerge.

As customer-facing AI is implemented more widely, designers will be responsible for making sure the outcomes are free of bias and produce ethical and valuable results.

The metaverse will also bring interaction design challenges never seen before. Unlike UI design, virtual reality has few established interaction patterns, and many things are yet to be figured out.

AI will change the way designers work and the skills that are in demand in the job market. It is clear now that most of the pixel-pushing and routine design work will be automated. It is not clear yet what else will be automated as well. Designers will need to adapt and learn how to work with AI.

These are just the first days of AI, and the speed of current breakthroughs has no historical precedent. Even the possibility of General Intelligence that can perform any human intellectual task might not be as distant as we now imagine.